
Does YouTube radicalize users? This study says not, but it’s deeply flawed.

A new study of YouTube’s algorithm attracting attention this weekend claims that the online video giant “actively discourages” radicalization on the platform. And if that sounds suspect to you, it should.

The claim flies in the face of everything we know about YouTube’s recommendation algorithm. There has been plenty of evidence that it pulls users down a rabbit hole of extremist content. A 2018 study of videos recommended to political viewers during the 2016 election found that an overwhelming majority were pro-Trump. Far-right allies of the authoritarian Jair Bolsonaro in Brazil say he and they wouldn’t have won election without YouTube.

Now here comes Australian coder and data scientist Mark Ledwich, who conducted this new study along with UC Berkeley researcher Anna Zaitsev. The pair looked at 768 different political channels and 23 million recommendations for their research. All of the data was pulled from a fresh account that had never viewed videos on YouTube.

2. It turns out the late 2019 algorithm

*DESTROYS* conspiracy theorists, provocateurs and white identitarians

*Helps* partisans

*Hurts* almost everyone else. [Chart] compares an estimate of the recommendations presented (grey) to received (green) for each of the groups: pic.twitter.com/5qPtyi5ZIP

— Mark Ledwich (@mark_ledwich) December 28, 2019

In a Twitter thread, Ledwich presents some of their findings using oddly emotive language:

“It turns out the late 2019 algorithm

*DESTROYS* conspiracy theorists, provocateurs and white identitarians.”

Let’s break down why the study doesn’t measure up.

1. It’s woefully limited

The first problem: the study ignores prior versions of the algorithm. Sure, if you’re using the “late 2019” version as proof that YouTube “actively discourages” radicalization now, you may have a point. YouTube has spent the year tweaking its algorithm in response to the evidence that the platform was recommending extremist and conspiratorial content. The company announced this clean-up plan early in 2019.

But in a followup tweet, Ledwich says his study “takes aim” at the New York Times, in particular tech reporter Kevin Roose, “who have been on myth-filled crusade vs social media.”

“We should start questioning the authoritative status of outlets that have soiled themselves with agendas,” Ledwich continues — ironically, after having announced an agenda of his own.

4. My new article explains in detail. It takes aim at the NYT (in particular, @kevinroose) who have been on myth-filled crusade vs social media. We should start questioning the authoritative status of outlets that have soiled themselves with agendas. https://t.co/bt3mMscJi6

— Mark Ledwich (@mark_ledwich) December 28, 2019

Ledwich’s problem appears to be with the New York Times’s The Making of a YouTube Radical. The story’s subject, Caleb Cain, started being radicalized by YouTube video recommendations in 2014. Therefore, nothing about the 2019 YouTube algorithm debunks this story. The barn door is open, the horse has bolted.

Cain represents countless individuals who are now subscribed to extremist or conspiracy theory-related content. Creators publishing this content have had a five-year (or more) head start. They’ve already benefited from the old recommendation algorithm in order to reach hundreds of thousands of subscribers.

Roose hit back against Ledwich in a lengthy thread:

The only people who have the right data to study radicalization at scale work at YouTube, and they have made changes in 2019 they say have reduced “borderline content” recs by 70%. Why would they have done that, if that content wasn’t being recommended in the first place?

— Kevin Roose (@kevinroose) December 29, 2019

2. It’s clearly slanted

The second problem has to do with the subjective and highly suspect way Ledwich and Zaitsev have grouped YouTube channels. They have CNN categorized as “Partisan Left,” no different than, say, left-wing YouTube news outlet The Young Turks.

The study describes channels in this category as “exclusively critical of Republicans” and says they “would agree with this statement: ‘GOP policies are a threat to the well-being of the country.’”

This is, of course, self-evidently ridiculous. CNN is a mainstream media outlet which employs many former Republican politicians and members of the Trump administration as on-air contributors. It is often criticized, most notably by one of its former anchors, for allowing these commentators to spread falsehoods unchecked.

Naming CNN as “partisan left” betrays partisanship at the root of this study.

3. It doesn’t get YouTube

A third major problem: the researchers appear to not fully understand how YouTube works for regular users.

“One should note that the recommendations list provided to a user who has an account and who is logged into YouTube might differ from the list presented to this anonymous account,” the study says. “However, we do not believe that there is a drastic difference in the behavior of the algorithm.”

The researchers continue: “It would seem counter-intuitive for YouTube to apply vastly different criteria for anonymous users and users who are logged into their accounts, especially considering how complex creating such a recommendation algorithm is in the first place.”

That is an incorrect assumption. YouTube’s algorithm works by looking at what a user is watching and has watched. If you’re logged in, the YouTube algorithm has an entire history of content you’ve viewed at its disposal. Why wouldn’t it use that? It’s not just video-watching habits that YouTube has access to, either. Since YouTube accounts are connected to a Google account, simply being logged into any of Google’s services — such as, um, search — means you’re logged into YouTube as well.

There are other complex factors at play. Every time you hit “subscribe” on a YouTube channel, it affects what the algorithm recommends that you watch.

Any user can test for themselves whether being logged in to their YouTube account matters, and debunk this claim. Being logged into an account versus being an anonymous user makes a major difference to the algorithm, as other researchers of YouTube radicalization have pointed out.

Let’s not forget: the peddlers of extreme content adversarially navigate YouTube’s algorithm, optimizing the clickbaitiness of their video thumbnails and titles, while reputable sources attempt to maintain some semblance of impartiality. (None of this is modeled in the paper.)

— Arvind Narayanan (@random_walker) December 29, 2019

If you’re wondering how such a widely discussed problem has attracted so little scientific study before this paper, that’s exactly why. Many have tried, but chose to say nothing rather than publish meaningless results, leaving the field open for authors with lower standards.

— Arvind Narayanan (@random_walker) December 29, 2019

As experts in the field will tell you, it is extremely difficult to produce reliable, quantitative studies on YouTube recommendation radicalization for these very reasons. Every account will produce a different result based on each user’s personal viewing habits. YouTube itself would have the data necessary to effectively pursue accurate results. Ledwich does not.

We may never truly know the magnitude of YouTube radicalization. But we do know that this study completely misses the mark.

-

Business6 days ago

Business6 days agoLangdock raises $3M with General Catalyst to help businesses avoid vendor lock-in with LLMs

-

Entertainment5 days ago

Entertainment5 days agoWhat Robert Durst did: Everything to know ahead of ‘The Jinx: Part 2’

-

Entertainment5 days ago

Entertainment5 days agoThis nova is on the verge of exploding. You could see it any day now.

-

Business5 days ago

Business5 days agoIndia’s election overshadowed by the rise of online misinformation

-

Business5 days ago

Business5 days agoThis camera trades pictures for AI poetry

-

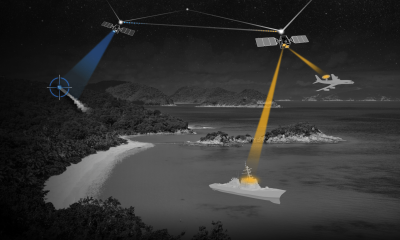

Business5 days ago

Business5 days agoCesiumAstro claims former exec spilled trade secrets to upstart competitor AnySignal

-

Entertainment7 days ago

Entertainment7 days agoDating culture has become selfish. How do we fix it?

-

Business7 days ago

Business7 days agoScreen Skinz raises $1.5 million seed to create custom screen protectors